Quick Summary

Integrated voice recognition is no longer “nice to have.” In 2026, it’s one of the baseline capabilities clinicians expect from modern documentation tools.

What matters is how voice recognition is integrated:

- Dictation tools convert clinician speech to text, but still require heavy editing and formatting.

- AI medical scribes use voice recognition plus clinical language understanding to draft structured notes from the visit.

- AI charting and workflow platforms combine voice input with templated charting and automation.

For any option, the safety rule is the same: voice recognition output must be treated as a draft and reviewed by the clinician.

What is integrated voice recognition?

Integrated voice recognition refers to speech-to-text that is built directly into a clinical documentation workflow.

Instead of recording audio in one tool and writing notes in another, integrated voice recognition supports documentation as part of the visit workflow:

- capturing the clinician’s spoken narrative (dictation) and/or the clinician–patient conversation

- converting speech into text in near real time

- supporting clinical documentation steps (structure, sections, templates, summaries)

The key word is integrated: the value comes from reducing context-switching and minimizing the “admin tail” after each encounter.

Why integrated voice recognition matters in medical documentation

Documentation burden is not just about typing speed. It’s about:

- time lost after visits

- inconsistencies between providers

- missing details when clinics run behind

- delayed chart completion that spills into evenings

Integrated voice recognition helps by moving documentation closer to the point of care.

1) Real-time drafting (when done responsibly)

Tools that support real-time drafting can produce a usable draft during or immediately after a visit.

Related reading: Real-time AI medical scribe in 2026

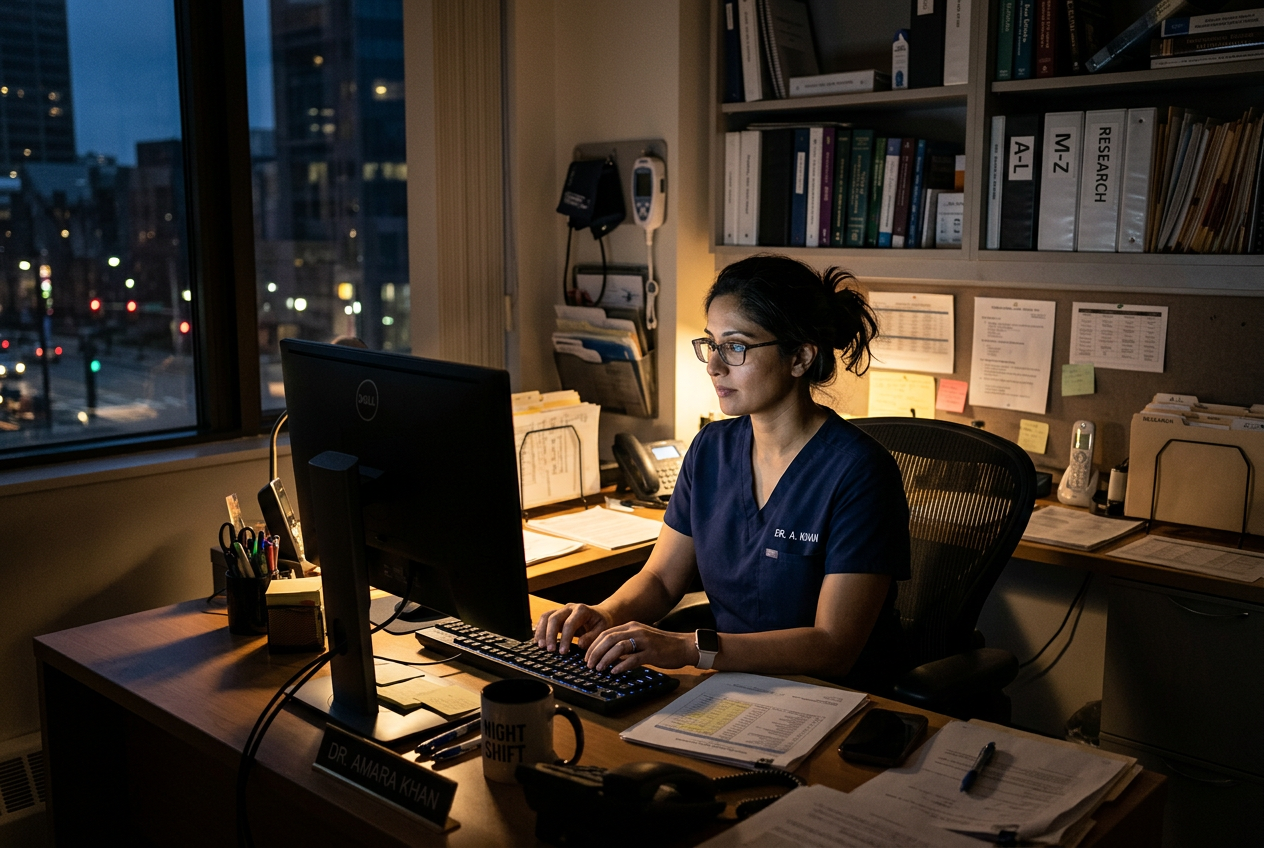

2) Burnout reduction (through less after-hours charting)

Reducing after-hours documentation is one of the most common reasons clinicians evaluate voice-enabled documentation.

3) Better structure than plain transcription

Modern clinical documentation tools aim to produce structured drafts (for example, SOAP-style organization) rather than raw transcripts.

Related reading: AI-generated doctors’ notes in 2026

The 3 service types that deliver integrated voice recognition

Not all “voice recognition” products solve the same problem. In 2026, most tools fall into one of three categories.

1) Real-time AI medical scribe platforms

These platforms combine:

- integrated voice recognition

- clinical language understanding

- draft note generation (structured sections)

- clinician review and editing before finalization

This is the category most clinics mean when they say “AI scribe.”

If multilingual documentation matters in your clinic, prioritize platforms that support consistent output across languages.

Related reading: Multilingual medical transcription AI

2) Voice dictation tools for clinicians

Dictation tools focus on converting the clinician’s speech to text.

They can help in high-volume workflows, but the tradeoff is usually:

- more manual editing

- more formatting work

- less automatic structuring of clinical notes

If you’re deciding between approaches, start here:

Related reading: Dictation vs transcription in healthcare

3) AI charting platforms with speech recognition

These tools combine voice recognition with charting templates and workflow automation.

They can be useful when your clinic needs structured charting, task automation, and standardized output across providers.

Related reading: AI medical charting in multilingual clinics

Clinical workflow reality: where voice recognition helps and where it fails

Integrated voice recognition performs best when it reduces friction without changing how clinicians practice.

A realistic workflow usually looks like this:

- Clinician conducts the visit normally (no “scripted AI conversation”).

- The tool captures relevant speech (dictation and/or visit conversation).

- A draft note is generated.

- Clinician performs a short safety review:

  - confirm objective measures and key negatives

  - confirm medication names, doses, and instructions (if relevant)

  - confirm diagnoses and plan are documented accurately

  - confirm follow-up steps and risk items are captured

- Clinician finalizes the note and stores it in the clinic record system.

Where voice recognition often struggles (and requires extra review):

- noisy environments

- accents, speech overlap, or rapid back-and-forth dialogue

- medication names and dosages

- complex multi-problem visits

- sensitive or high-risk discussions

Practical safeguard rule: If it affects the chart, it must be reviewable, editable, and clinician-approved.
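One way to picture the "reviewable, editable, clinician-approved" rule is as a small state model. The sketch below is illustrative only: the `ClinicalNote` class, its field names, and the `dr_lee` identifier are hypothetical and do not describe any particular product. It shows a note that always starts as a draft, keeps prior versions when edited, and can only become final through an explicit clinician sign-off.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Optional


class NoteStatus(Enum):
    DRAFT = "draft"
    FINAL = "final"


@dataclass
class ClinicalNote:
    """Hypothetical note record: every note starts life as a draft."""
    text: str
    status: NoteStatus = NoteStatus.DRAFT
    reviewed_by: Optional[str] = None
    finalized_at: Optional[datetime] = None
    edit_log: list = field(default_factory=list)

    def edit(self, new_text: str, editor: str) -> None:
        # Editable: drafts can be changed, finalized notes cannot.
        if self.status is NoteStatus.FINAL:
            raise PermissionError("Finalized notes cannot be edited in place.")
        # Reviewable: keep the previous version so changes are traceable.
        self.edit_log.append((editor, self.text))
        self.text = new_text

    def finalize(self, clinician_id: str) -> None:
        # Clinician-approved: there is no path to FINAL without a named reviewer.
        if not clinician_id:
            raise ValueError("A clinician must approve the note.")
        if self.status is NoteStatus.FINAL:
            raise PermissionError("Note is already finalized.")
        self.reviewed_by = clinician_id
        self.finalized_at = datetime.now(timezone.utc)
        self.status = NoteStatus.FINAL


# Usage: the generated draft is corrected, then signed off.
note = ClinicalNote(text="S: Patient reports cough for 3 days.")
note.edit("S: Patient reports dry cough for 3 days.", editor="dr_lee")
note.finalize(clinician_id="dr_lee")
print(note.status.value, note.reviewed_by)
```

The design point is that "final" is reachable only through `finalize`, so an auto-generated draft cannot silently become part of the record.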

Safety and accuracy: what clinicians should assume

Voice recognition is useful, but it is not perfect.

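Across the healthcare literature on speech recognition and dictated documentation, errors are consistently reported in real-world use, and editing/review remains necessary. For example, Zhou et al. (2018) reported an error rate of about 7.4% in unedited speech-recognition drafts, which fell below 1% after transcriptionist and clinician review.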

Operational takeaway: Choose a system that makes review fast and obvious (draft vs final), rather than one that optimizes for “hands-free auto-finalization.”

Security and compliance considerations (non-negotiables)

Before using any voice-enabled documentation tool, clinics should confirm:

- where audio and text are stored

- encryption in transit and at rest

- retention and deletion policies

- who can access data (role-based controls)

- whether the tool supports your jurisdiction’s privacy expectations

Related reading: Is AI transcription safe in healthcare?

What to look for in an AI medical scribe with integrated voice recognition

Use this checklist when evaluating tools.

1) Clinician control (draft ≠ final)

The tool should make it impossible to confuse drafts with finalized records.

2) Clinical context support

Speech-to-text alone is not enough. The platform should handle clinical structure and terminology without inventing details.

3) Editing speed and traceability

You should be able to quickly find and correct:

- medication terms

- measurements

- key negatives

- plan details
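As a rough illustration of what "quickly find" could mean in practice, the sketch below scans a draft for two of the categories above (measurements or doses, and key negatives) so a reviewer's eye goes to them first. The patterns, the `flag_for_review` name, and the sample sentence are assumptions made for this example; they are not clinically validated, and medication names would need a proper drug vocabulary that is not shown here.

```python
import re

# Illustrative patterns only; a real tool would need clinically validated rules.
REVIEW_PATTERNS = {
    "measurement_or_dose": re.compile(
        r"\b\d+(?:\.\d+)?\s?(?:mg|mcg|g|ml|mmHg|bpm|kg|cm|%)(?![A-Za-z])",
        re.IGNORECASE,
    ),
    "key_negative": re.compile(
        r"\b(?:denies|no|not|without|negative for)\b", re.IGNORECASE
    ),
}


def flag_for_review(draft: str) -> list[tuple[str, str, int]]:
    """Return (category, matched text, start offset) tuples sorted by position."""
    hits = []
    for category, pattern in REVIEW_PATTERNS.items():
        for match in pattern.finditer(draft):
            hits.append((category, match.group(0), match.start()))
    return sorted(hits, key=lambda hit: hit[2])


draft = (
    "Patient denies chest pain. BP 120/80 mmHg. "
    "Start amoxicillin 500 mg twice daily."
)
for category, text, offset in flag_for_review(draft):
    print(f"{offset:>3}  {category:<20} {text}")
```

A flag here means "look at this", not "this is wrong": the clinician still reads the whole note, but the riskiest tokens are easier to locate.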

4) Workflow fit

The best system is the one your clinicians will actually use daily.

5) Language and accessibility needs

If you serve multilingual populations, test real visits in your common languages.
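If you want a number to compare across languages or rooms, one common metric is word error rate (WER): the word-level edit distance between a trusted reference transcript and the tool's output, divided by the reference length. The sketch below assumes you have a human-checked reference for each test visit; the function name and sample sentences are made up for illustration, and the whitespace tokenization is a simplification that will not suit every language.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("Reference transcript must not be empty.")

    # Classic dynamic-programming edit distance, computed row by row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # word missing from the output
                curr[j - 1] + 1,     # extra word in the output
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "start metoprolol 25 mg twice daily"
hypothesis = "start metformin 25 mg twice daily"
print(round(word_error_rate(reference, hypothesis), 3))
```

Note what the example shows: one wrong word out of six gives a modest-looking WER, yet the error is a different medication. A low WER does not replace checking the clinically critical terms.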

6) Privacy posture that matches healthcare use

If the vendor cannot clearly answer security/retention questions, do not proceed.
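The "do not proceed" rule can be made explicit by tracking vendor answers against the security questions listed earlier. This is a minimal sketch: the topic keys, the `unanswered_topics` helper, and the sample answers are hypothetical, and it only checks that an answer exists, not that the answer is adequate.

```python
# Topics mirror the pre-adoption security questions; key names are illustrative.
REQUIRED_TOPICS = [
    "storage_location",
    "encryption_in_transit",
    "encryption_at_rest",
    "retention_and_deletion",
    "role_based_access",
    "jurisdiction_fit",
]


def unanswered_topics(vendor_answers: dict[str, str]) -> list[str]:
    """Return required topics with no answer, or only a blank one."""
    return [
        topic for topic in REQUIRED_TOPICS
        if not vendor_answers.get(topic, "").strip()
    ]


def ready_to_proceed(vendor_answers: dict[str, str]) -> bool:
    # Any gap blocks adoption; judging answer quality is a separate step.
    return not unanswered_topics(vendor_answers)


answers = {
    "storage_location": "Audio and text stored in-region.",
    "encryption_in_transit": "TLS for all connections.",
    "encryption_at_rest": "Encrypted at rest.",
    "retention_and_deletion": "",
    "role_based_access": "Roles for clinician, admin, and staff.",
}
print(unanswered_topics(answers))
print(ready_to_proceed(answers))
```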

How integrated voice recognition fits into broader workflow automation

Voice recognition becomes more valuable when it reduces downstream admin tasks:

- closing charts sooner

- reducing “missing note” follow-ups

- minimizing handoffs between clinician and staff

Related reading: Healthcare workflow automation gaps

Ready to evaluate AI documentation tools?

If you’re choosing between tools, keep the evaluation grounded in workflow outcomes:

- Will it reduce after-hours charting?

- Will it improve note consistency and completeness?

- Can clinicians review and finalize safely?

- Does it fit your clinic’s privacy expectations?

Found an error or want an update? Email help@dorascribe.com and we’ll review it.

FAQ: Integrated Voice Recognition in Healthcare (2026)

What does “integrated voice recognition” mean in healthcare?

It means speech-to-text is built into the documentation workflow (not a separate dictation step), supporting draft notes and charting processes during or immediately after the visit.

Is integrated voice recognition the same as an AI medical scribe?

Not always. Dictation tools can have integrated voice recognition, but AI medical scribes typically add clinical language understanding and note structuring from the encounter.

Do clinicians still need to review voice recognition output?

Yes. Speech recognition and AI-generated drafts should be treated as drafts. Clinician review and editing remain essential for accuracy and safety.

What’s the biggest risk with voice recognition documentation?

Errors in clinical terms, medication names, and context. Tools must make review simple and obvious, and clinics should avoid any workflow that bypasses clinician final approval.

Does voice recognition work well in busy clinics?

It can, but performance depends on environment (noise), speaker overlap, and visit complexity. Clinics should test real conditions before rolling out broadly.

What should I ask a vendor before adopting a voice-enabled documentation tool?

Ask about data storage, encryption, retention/deletion policies, access controls, audit logs, and how drafts are reviewed and finalized.

What’s the difference between dictation, transcription, and AI scribing?

Dictation is the clinician speaking the note aloud so it can be converted to text. Transcription converts recorded audio (a dictation or the visit conversation) to text, often after the visit. AI scribing aims to draft structured notes from the encounter, usually with additional automation.

Evidence & Sources

- Johnson M, et al. A systematic review of speech recognition technology in health care. 2014. (BMC Medical Informatics and Decision Making / PubMed Central)

- Blackley SV, et al. Speech recognition for clinical documentation from 1990 to 2018: a systematic review. 2019. (Journal of the American Medical Informatics Association / PubMed Central)

- Kumah-Crystal YA, et al. Electronic Health Record Interactions through Voice: A Review. 2018. (Applied Clinical Informatics / PubMed Central)

- Zhou L, et al. Analysis of errors in dictated clinical documents assisted by speech recognition software and professional transcriptionists. 2018. (JAMA Network Open)

- Olson KD, et al. Use of ambient AI scribes to reduce administrative burden and professional burnout. 2025. (JAMA Network Open)